Hi there, I’m Rashmeet!

I’m a Ph.D. candidate in Computer Science at Arizona State University, finishing my dissertation on context-aware abstractions for generalizable long-horizon sequential decision-making. My work sits at the intersection of reinforcement learning, planning, and generative AI, focusing on how agents can learn structured representations that let them reason and act reliably across long-horizon tasks.

I’ve had the chance to work on problems at real-world scale. At Amazon Air, I designed and trained an attention-based foundation model for large-scale vehicle routing, using LLM-inspired supervised and RL fine-tuning to produce real-time decisions under operational constraints. At LinkedIn, I worked on offline RL for LLM-based task-oriented agents. Before that, I built an AI system that uses probabilistic logic to analyze UV spectra from the Hubble Space Telescope, work that earned a Chambliss Astronomy Achievement Student Award from the American Astronomical Society.

More recently, I’ve been working on skill learning for LLM agents and contributing to diffusion-guided task and motion planning for robotics. The common thread across all of this work is one question: how do we build agents that generalize in long-horizon settings?

I’m finishing my Ph.D. in early 2026 and am open to Applied Scientist, ML Engineer, and Research Scientist roles in GenAI agents, decision-making systems, and embodied AI.

Interests

- Reinforcement Learning and Planning

- Learning Abstractions

- Generalization and Transfer

- Robotics

Education

Ph.D. in Computer Science, 2020 - present

Arizona State University

M.S. in Computer Science, 2018 - 2020

Arizona State University

B.E. in Information Technology, 2013 - 2017

Pune Institute of Computer Technology

Publications

Awards & Press

Chambliss Astronomy Achievement Student Award

American Astronomical Society awards ASU students Chambliss medals

Rashmeet Kaur Nayyar receives Chambliss medal from American Astronomical Society

Projects

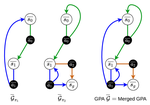

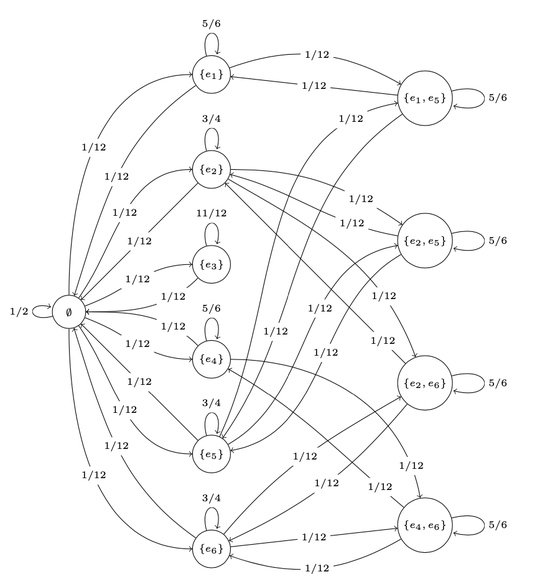

Perfect Observability is a Myth - Restraining Bolts in the Real World

Developed a framework for imposing constraints on an AI agent in a world with noisy observations. (Poster attached.)

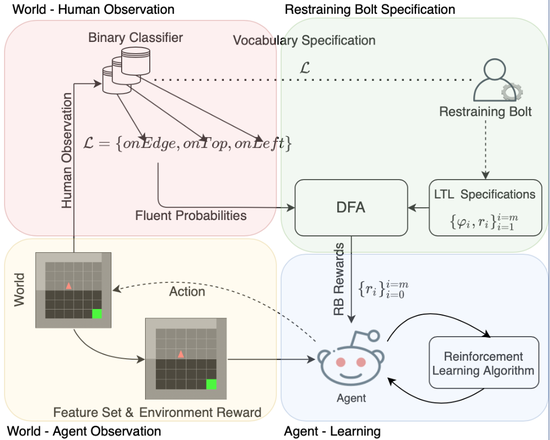

Card Shuffling Using Markov Chains

Evaluated overhand, top-to-random, Knuth, transposition, Thorp, and riffle card-shuffling techniques. (Presentation attached.)

Vision-Based Manipulator Movement with Fetch

Implemented a visual-feedback-based method that guides the Fetch mobile manipulator’s end-effector to a target object without using AR markers. (Video attached.)

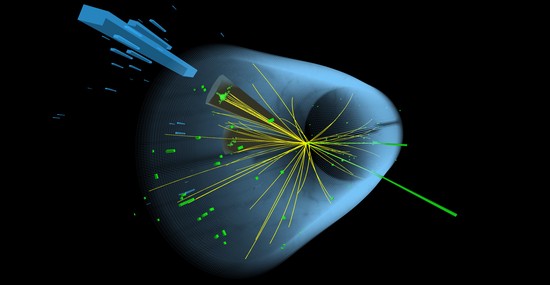

Higgs Boson Particle Discovery

Performed exploratory data analysis and compared how well machine learning techniques such as XGBoost and neural networks classify ATLAS experiment events.

AI Pacman Agent

Implemented AI methods including DFS, BFS, UCS, A* search, minimax, expectimax, and alpha-beta pruning in Python to build Pacman agents for a multi-agent environment.

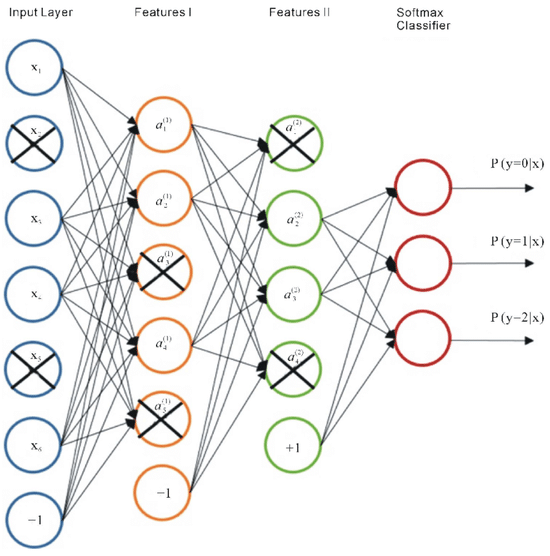

Denoising and Stacked Autoencoders

Built a denoising autoencoder and evaluated its denoising performance at different noise levels. Trained a stacked autoencoder layer by layer in an unsupervised fashion, then fine-tuned the network together with a classifier.